Compare commits

54 commits

zeronet-en

...

py3-latest

| Author | SHA1 | Date |
|---|---|---|
| | 7edbda70f5 | |
| | 290025958f | |
| | 25c5658b72 | |
| | 2970e3a205 | |
| | 866179f6a3 | |
| | e8cf14bcf5 | |
| | fedcf9c1c6 | |
| | 117bcf25d9 | |
| | a429349cd4 | |
| | d8e52eaabd | |
| | f2ef6e5d9c | |
| | dd2bb07cfb | |
| | 06a9d1e0ff | |
| | c354f9e24d | |
| | 77b4297224 | |
| | edc5310cd2 | |
| | 99a8409513 | |
| | 3550a64837 | |
| | 85ef28e6fb | |
| | 1500d9356b | |
| | f1a71770fa | |
| | f79a73cef4 | |
| | 0731787518 | |
| | ad95eede10 | |
| | 459b0a73ca | |
| | b7870edd2e | |
| | d5703541be | |
| | ba96654e1d | |
| | ac72d623f0 | |
| | fd857985f6 | |
| | 966f671efe | |
| | 86109ae4b2 | |
| | 611fc774c8 | |
| | 0ed0b746a4 | |
| | 49e68c3a78 | |
| | 3ac677c9a7 | |
| | 016cfe9e16 | |
| | 712ee18634 | |
| | 5579c6b3cc | |
| | c3815c56ea | |
| | b257338b0a | |
| | ac70f83879 | |
| | 2ad80afa10 | |
| | fe048cd08c | |
| | f9d7ccd83c | |
| | b29884db78 | |
| | a5190234ab | |
| | 00db9c9f87 | |
| | 02ceb70a4f | |
| | 7ce118d645 | |
| | eb397cf4c7 | |
| | f8c9f2da4f | |
| | 69d7eacfa4 | |
| | f498aedb96 | |

61 changed files with 1405 additions and 3361 deletions

40

.forgejo/workflows/build-on-commit.yml

Normal file

@@ -0,0 +1,40 @@
+name: Build Docker Image on Commit
+
+on:
+  push:
+    branches:
+      - main
+    tags:
+      - '!' # Exclude tags
+
+jobs:
+  build-and-publish:
+    runs-on: docker-builder
+
+    steps:
+      - name: Checkout repository
+        uses: actions/checkout@v4
+
+      - name: Set REPO_VARS
+        id: repo-url
+        run: |
+          echo "REPO_HOST=$(echo "${{ github.server_url }}" | sed 's~http[s]*://~~g')" >> $GITHUB_ENV
+          echo "REPO_PATH=${{ github.repository }}" >> $GITHUB_ENV
+
+      - name: Login to OCI registry
+        run: |
+          echo "${{ secrets.OCI_TOKEN }}" | docker login $REPO_HOST -u "${{ secrets.OCI_USER }}" --password-stdin
+
+      - name: Build and push Docker images
+        run: |
+          # Build Docker image with commit SHA
+          docker build -t $REPO_HOST/$REPO_PATH:${{ github.sha }} .
+          docker push $REPO_HOST/$REPO_PATH:${{ github.sha }}
+
+          # Build Docker image with nightly tag
+          docker tag $REPO_HOST/$REPO_PATH:${{ github.sha }} $REPO_HOST/$REPO_PATH:nightly
+          docker push $REPO_HOST/$REPO_PATH:nightly
+
+          # Remove local images to save storage
+          docker rmi $REPO_HOST/$REPO_PATH:${{ github.sha }}
+          docker rmi $REPO_HOST/$REPO_PATH:nightly
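The `Set REPO_VARS` and build steps in this workflow derive a registry host from the server URL and then compose two image references from it. A minimal Python sketch of that string handling follows. The helper name `image_refs` and the example values are assumptions made for illustration; the workflow itself does this with `sed` and shell variables.

```python
import re
from typing import List


def image_refs(server_url: str, repository: str, sha: str) -> List[str]:
    """Compose the two image references the on-commit workflow pushes.

    Hypothetical helper for illustration only; not part of the repository.
    """
    # Same substitution as sed 's~http[s]*://~~g': drop "http://" or "https://"
    repo_host = re.sub(r"http[s]*://", "", server_url)
    base = f"{repo_host}/{repository}"
    # One tag pinned to the commit, plus a moving "nightly" tag
    return [f"{base}:{sha}", f"{base}:nightly"]


if __name__ == "__main__":
    # Example values only; a real run takes them from the workflow context
    for ref in image_refs("https://git.example.org", "owner/zeronet", "0123abc"):
        print(ref)
```

Tagging by commit SHA keeps every build addressable, while `nightly` always points at the most recent push to `main`.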

37

.forgejo/workflows/build-on-tag.yml

Normal file

@@ -0,0 +1,37 @@
+name: Build and Publish Docker Image on Tag
+
+on:
+  push:
+    tags:
+      - '*'
+
+jobs:
+  build-and-publish:
+    runs-on: docker-builder
+
+    steps:
+      - name: Checkout repository
+        uses: actions/checkout@v4
+
+      - name: Set REPO_VARS
+        id: repo-url
+        run: |
+          echo "REPO_HOST=$(echo "${{ github.server_url }}" | sed 's~http[s]*://~~g')" >> $GITHUB_ENV
+          echo "REPO_PATH=${{ github.repository }}" >> $GITHUB_ENV
+
+      - name: Login to OCI registry
+        run: |
+          echo "${{ secrets.OCI_TOKEN }}" | docker login $REPO_HOST -u "${{ secrets.OCI_USER }}" --password-stdin
+
+      - name: Build and push Docker image
+        run: |
+          TAG=${{ github.ref_name }} # Get the tag name from the context
+          # Build and push multi-platform Docker images
+          docker build -t $REPO_HOST/$REPO_PATH:$TAG --push .
+          # Tag and push latest
+          docker tag $REPO_HOST/$REPO_PATH:$TAG $REPO_HOST/$REPO_PATH:latest
+          docker push $REPO_HOST/$REPO_PATH:latest
+
+          # Remove the local image to save storage
+          docker rmi $REPO_HOST/$REPO_PATH:$TAG
+          docker rmi $REPO_HOST/$REPO_PATH:latest

11

.github/FUNDING.yml

vendored

@@ -1 +1,10 @@
-custom: https://zerolink.ml/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/help_zeronet/donate/
+github: canewsin
+patreon: # Replace with a single Patreon username e.g., user1
+open_collective: # Replace with a single Open Collective username e.g., user1
+ko_fi: canewsin
+tidelift: # Replace with a single Tidelift platform-name/package-name e.g., npm/babel
+community_bridge: # Replace with a single Community Bridge project-name e.g., cloud-foundry
+liberapay: canewsin
+issuehunt: # Replace with a single IssueHunt username e.g., user1
+otechie: # Replace with a single Otechie username e.g., user1
+custom: ['https://paypal.me/PramUkesh', 'https://zerolink.ml/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/help_zeronet/donate/']

72

.github/workflows/codeql-analysis.yml

vendored

Normal file

@@ -0,0 +1,72 @@
+# For most projects, this workflow file will not need changing; you simply need
+# to commit it to your repository.
+#
+# You may wish to alter this file to override the set of languages analyzed,
+# or to provide custom queries or build logic.
+#
+# ******** NOTE ********
+# We have attempted to detect the languages in your repository. Please check
+# the `language` matrix defined below to confirm you have the correct set of
+# supported CodeQL languages.
+#
+name: "CodeQL"
+
+on:
+  push:
+    branches: [ py3-latest ]
+  pull_request:
+    # The branches below must be a subset of the branches above
+    branches: [ py3-latest ]
+  schedule:
+    - cron: '32 19 * * 2'
+
+jobs:
+  analyze:
+    name: Analyze
+    runs-on: ubuntu-latest
+    permissions:
+      actions: read
+      contents: read
+      security-events: write
+
+    strategy:
+      fail-fast: false
+      matrix:
+        language: [ 'javascript', 'python' ]
+        # CodeQL supports [ 'cpp', 'csharp', 'go', 'java', 'javascript', 'python', 'ruby' ]
+        # Learn more about CodeQL language support at https://aka.ms/codeql-docs/language-support
+
+    steps:
+    - name: Checkout repository
+      uses: actions/checkout@v3
+
+    # Initializes the CodeQL tools for scanning.
+    - name: Initialize CodeQL
+      uses: github/codeql-action/init@v2
+      with:
+        languages: ${{ matrix.language }}
+        # If you wish to specify custom queries, you can do so here or in a config file.
+        # By default, queries listed here will override any specified in a config file.
+        # Prefix the list here with "+" to use these queries and those in the config file.
+
+        # Details on CodeQL's query packs refer to : https://docs.github.com/en/code-security/code-scanning/automatically-scanning-your-code-for-vulnerabilities-and-errors/configuring-code-scanning#using-queries-in-ql-packs
+        # queries: security-extended,security-and-quality
+
+
+    # Autobuild attempts to build any compiled languages (C/C++, C#, or Java).
+    # If this step fails, then you should remove it and run the build manually (see below)
+    - name: Autobuild
+      uses: github/codeql-action/autobuild@v2
+
+    # ℹ️ Command-line programs to run using the OS shell.
+    # 📚 See https://docs.github.com/en/actions/using-workflows/workflow-syntax-for-github-actions#jobsjob_idstepsrun
+
+    # If the Autobuild fails above, remove it and uncomment the following three lines.
+    # modify them (or add more) to build your code if your project, please refer to the EXAMPLE below for guidance.
+
+    # - run: |
+    #   echo "Run, Build Application using script"
+    #   ./location_of_script_within_repo/buildscript.sh
+
+    - name: Perform CodeQL Analysis
+      uses: github/codeql-action/analyze@v2
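The `schedule` trigger in this workflow uses the cron expression `32 19 * * 2`: minute 32, hour 19, any day of month, any month, day-of-week 2 (Tuesday). GitHub Actions evaluates scheduled triggers in UTC. The sketch below computes the next firing time for that one expression; the function name `next_codeql_run` is an assumption for illustration, and it is not a general cron parser.

```python
from datetime import datetime, timedelta, timezone


def next_codeql_run(now: datetime) -> datetime:
    """Next firing time of cron '32 19 * * 2' strictly after `now` (UTC).

    Hypothetical helper; handles only this fixed weekly expression.
    """
    candidate = now.replace(hour=19, minute=32, second=0, microsecond=0)
    # Python counts Monday as 0, so Tuesday is 1 (cron's day-of-week 2)
    days_ahead = (1 - candidate.weekday()) % 7
    candidate += timedelta(days=days_ahead)
    if candidate <= now:
        # Already past this week's slot: move to next Tuesday
        candidate += timedelta(days=7)
    return candidate


if __name__ == "__main__":
    print(next_codeql_run(datetime.now(timezone.utc)))
```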

71

.github/workflows/tests.yml

vendored

@@ -4,49 +4,48 @@ on: [push, pull_request]

 jobs:
   test:
-
-    runs-on: ubuntu-18.04
+    runs-on: ubuntu-20.04
     strategy:
       max-parallel: 16
       matrix:
-        python-version: [3.6, 3.7, 3.8, 3.9]
+        python-version: ["3.7", "3.8", "3.9"]

     steps:
-    - name: Checkout ZeroNet
-      uses: actions/checkout@v2
-      with:
-        submodules: 'true'
+      - name: Checkout ZeroNet
+        uses: actions/checkout@v2
+        with:
+          submodules: "true"

-    - name: Set up Python ${{ matrix.python-version }}
-      uses: actions/setup-python@v1
-      with:
-        python-version: ${{ matrix.python-version }}
+      - name: Set up Python ${{ matrix.python-version }}
+        uses: actions/setup-python@v1
+        with:
+          python-version: ${{ matrix.python-version }}

-    - name: Prepare for installation
-      run: |
-        python3 -m pip install setuptools
-        python3 -m pip install --upgrade pip wheel
-        python3 -m pip install --upgrade codecov coveralls flake8 mock pytest==4.6.3 pytest-cov selenium
+      - name: Prepare for installation
+        run: |
+          python3 -m pip install setuptools
+          python3 -m pip install --upgrade pip wheel
+          python3 -m pip install --upgrade codecov coveralls flake8 mock pytest==4.6.3 pytest-cov selenium

-    - name: Install
-      run: |
-        python3 -m pip install --upgrade -r requirements.txt
-        python3 -m pip list
+      - name: Install
+        run: |
+          python3 -m pip install --upgrade -r requirements.txt
+          python3 -m pip list

-    - name: Prepare for tests
-      run: |
-        openssl version -a
-        echo 0 | sudo tee /proc/sys/net/ipv6/conf/all/disable_ipv6
+      - name: Prepare for tests
+        run: |
+          openssl version -a
+          echo 0 | sudo tee /proc/sys/net/ipv6/conf/all/disable_ipv6

-    - name: Test
-      run: |
-        catchsegv python3 -m pytest src/Test --cov=src --cov-config src/Test/coverage.ini
-        export ZERONET_LOG_DIR="log/CryptMessage"; catchsegv python3 -m pytest -x plugins/CryptMessage/Test
-        export ZERONET_LOG_DIR="log/Bigfile"; catchsegv python3 -m pytest -x plugins/Bigfile/Test
-        export ZERONET_LOG_DIR="log/AnnounceLocal"; catchsegv python3 -m pytest -x plugins/AnnounceLocal/Test
-        export ZERONET_LOG_DIR="log/OptionalManager"; catchsegv python3 -m pytest -x plugins/OptionalManager/Test
-        export ZERONET_LOG_DIR="log/Multiuser"; mv plugins/disabled-Multiuser plugins/Multiuser && catchsegv python -m pytest -x plugins/Multiuser/Test
-        export ZERONET_LOG_DIR="log/Bootstrapper"; mv plugins/disabled-Bootstrapper plugins/Bootstrapper && catchsegv python -m pytest -x plugins/Bootstrapper/Test
-        find src -name "*.json" | xargs -n 1 python3 -c "import json, sys; print(sys.argv[1], end=' '); json.load(open(sys.argv[1])); print('[OK]')"
-        find plugins -name "*.json" | xargs -n 1 python3 -c "import json, sys; print(sys.argv[1], end=' '); json.load(open(sys.argv[1])); print('[OK]')"
-        flake8 . --count --select=E9,F63,F72,F82 --show-source --statistics --exclude=src/lib/pyaes/
+      - name: Test
+        run: |
+          catchsegv python3 -m pytest src/Test --cov=src --cov-config src/Test/coverage.ini
+          export ZERONET_LOG_DIR="log/CryptMessage"; catchsegv python3 -m pytest -x plugins/CryptMessage/Test
+          export ZERONET_LOG_DIR="log/Bigfile"; catchsegv python3 -m pytest -x plugins/Bigfile/Test
+          export ZERONET_LOG_DIR="log/AnnounceLocal"; catchsegv python3 -m pytest -x plugins/AnnounceLocal/Test
+          export ZERONET_LOG_DIR="log/OptionalManager"; catchsegv python3 -m pytest -x plugins/OptionalManager/Test
+          export ZERONET_LOG_DIR="log/Multiuser"; mv plugins/disabled-Multiuser plugins/Multiuser && catchsegv python -m pytest -x plugins/Multiuser/Test
+          export ZERONET_LOG_DIR="log/Bootstrapper"; mv plugins/disabled-Bootstrapper plugins/Bootstrapper && catchsegv python -m pytest -x plugins/Bootstrapper/Test
+          find src -name "*.json" | xargs -n 1 python3 -c "import json, sys; print(sys.argv[1], end=' '); json.load(open(sys.argv[1])); print('[OK]')"
+          find plugins -name "*.json" | xargs -n 1 python3 -c "import json, sys; print(sys.argv[1], end=' '); json.load(open(sys.argv[1])); print('[OK]')"
+          flake8 . --count --select=E9,F63,F72,F82 --show-source --statistics --exclude=src/lib/pyaes/
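The two `find ... | xargs -n 1 python3 -c "..."` lines in the `Test` step check that every `*.json` file under `src` and `plugins` parses. The same check can be sketched as a small standalone function. The name `check_json_files` is an assumption for illustration; unlike the one-liner, which starts one interpreter per file, this version walks the tree in a single process and collects every failure.

```python
import json
from pathlib import Path
from typing import List, Tuple


def check_json_files(root: str) -> List[Tuple[str, str]]:
    """Parse every *.json file under `root`; return (path, error) for failures.

    Hypothetical helper mirroring the workflow's find/xargs JSON check.
    """
    failures = []
    for path in sorted(Path(root).rglob("*.json")):
        if not path.is_file():
            continue  # skip directories that happen to end in .json
        try:
            with open(path, encoding="utf-8") as handle:
                json.load(handle)
        except (OSError, ValueError) as err:
            # json.JSONDecodeError is a subclass of ValueError
            failures.append((str(path), str(err)))
        else:
            print(path, "[OK]")
    return failures
```

Calling `check_json_files("src")` and `check_json_files("plugins")` and failing the job when either list is non-empty would approximate the workflow step.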

1

.gitignore

vendored

@@ -7,6 +7,7 @@ __pycache__/

 # Hidden files
 .*
+!/.forgejo
 !/.github
 !/.gitignore
 !/.travis.yml

81

CHANGELOG.md

@@ -1,6 +1,85 @@
-### ZeroNet 0.7.2 (2020-09-?) Rev4206?
+### ZeroNet 0.9.0 (2023-07-12) Rev4630
+- Fix RDos Issue in Plugins https://github.com/ZeroNetX/ZeroNet-Plugins/pull/9
+- Add trackers to Config.py for failsafety incase missing trackers.txt
+- Added Proxy links
+- Fix pysha3 dep installation issue
+- FileRequest -> Remove Unnecessary check, Fix error wording
+- Fix Response when site is missing for `actionAs`
+
+
+### ZeroNet 0.8.5 (2023-02-12) Rev4625
+- Fix(https://github.com/ZeroNetX/ZeroNet/pull/202) for SSL cert gen failed on Windows.
+- default theme-class for missing value in `users.json`.
+- Fetch Stats Plugin changes.
+
+### ZeroNet 0.8.4 (2022-12-12) Rev4620
+- Increase Minimum Site size to 25MB.
+
+### ZeroNet 0.8.3 (2022-12-11) Rev4611
+- main.py -> Fix accessing unassigned varible
+- ContentManager -> Support for multiSig
+- SiteStrorage.py -> Fix accessing unassigned varible
+- ContentManager.py Improve Logging of Valid Signers
+
+### ZeroNet 0.8.2 (2022-11-01) Rev4610
+- Fix Startup Error when plugins dir missing
+- Move trackers to seperate file & Add more trackers
+- Config:: Skip loading missing tracker files
+- Added documentation for getRandomPort fn
+
+### ZeroNet 0.8.1 (2022-10-01) Rev4600
+- fix readdress loop (cherry-pick previously added commit from conservancy)
+- Remove Patreon badge
+- Update README-ru.md (#177)
+- Include inner_path of failed request for signing in error msg and response
+- Don't Fail Silently When Cert is Not Selected
+- Console Log Updates, Specify min supported ZeroNet version for Rust version Protocol Compatibility
+- Update FUNDING.yml
+
+### ZeroNet 0.8.0 (2022-05-27) Rev4591
+- Revert File Open to catch File Access Errors.
+
+### ZeroNet 0.7.9-patch (2022-05-26) Rev4586
+- Use xescape(s) from zeronet-conservancy
+- actionUpdate response Optimisation
+- Fetch Plugins Repo Updates
+- Fix Unhandled File Access Errors
+- Create codeql-analysis.yml
+
+### ZeroNet 0.7.9 (2022-05-26) Rev4585
+- Rust Version Compatibility for update Protocol msg
+- Removed Non Working Trakers.
+- Dynamically Load Trackers from Dashboard Site.
+- Tracker Supply Improvements.
+- Fix Repo Url for Bug Report
+- First Party Tracker Update Service using Dashboard Site.
+- remove old v2 onion service [#158](https://github.com/ZeroNetX/ZeroNet/pull/158)
+
+### ZeroNet 0.7.8 (2022-03-02) Rev4580
+- Update Plugins with some bug fixes and Improvements
+
+### ZeroNet 0.7.6 (2022-01-12) Rev4565
+- Sync Plugin Updates
+- Clean up tor v3 patch [#115](https://github.com/ZeroNetX/ZeroNet/pull/115)
+- Add More Default Plugins to Repo
+- Doubled Site Publish Limits
+- Update ZeroNet Repo Urls [#103](https://github.com/ZeroNetX/ZeroNet/pull/103)
+- UI/UX: Increases Size of Notifications Close Button [#106](https://github.com/ZeroNetX/ZeroNet/pull/106)
+- Moved Plugins to Seperate Repo
+- Added `access_key` variable in Config, this used to access restrited plugins when multiuser plugin is enabled. When MultiUserPlugin is enabled we cannot access some pages like /Stats, this key will remove such restriction with access key.
+- Added `last_connection_id_current_version` to ConnectionServer, helpful to estimate no of connection from current client version.
+- Added current version: connections to /Stats page. see the previous point.
+
+### ZeroNet 0.7.5 (2021-11-28) Rev4560
+- Add more default trackers
+- Change default homepage address to `1HELLoE3sFD9569CLCbHEAVqvqV7U2Ri9d`
+- Change default update site address to `1Update8crprmciJHwp2WXqkx2c4iYp18`
+
+### ZeroNet 0.7.3 (2021-11-28) Rev4555
+- Fix xrange is undefined error
+- Fix Incorrect viewport on mobile while loading
+- Tor-V3 Patch by anonymoose


 ### ZeroNet 0.7.1 (2019-07-01) Rev4206
 ### Added
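The 0.9.0 and 0.8.2 changelog entries describe falling back to built-in trackers when `trackers.txt` is missing and skipping tracker files that do not exist. The sketch below shows one way such a fallback could look. It is not taken from `Config.py`: the function name `load_trackers`, the one-URL-per-line file format with `#` comments, and the example URLs are all assumptions for illustration.

```python
from pathlib import Path
from typing import Iterable, List


def load_trackers(path: str, fallback: Iterable[str]) -> List[str]:
    """Return tracker URLs read from `path`, or `fallback` if none are found.

    Hypothetical helper; the file format (one URL per line, '#' comments)
    is an assumption, not ZeroNet's documented format.
    """
    file = Path(path)
    if not file.is_file():
        # Missing file: skip it instead of raising
        return list(fallback)
    trackers = [
        line.strip()
        for line in file.read_text(encoding="utf-8").splitlines()
        if line.strip() and not line.lstrip().startswith("#")
    ]
    # An empty file also falls back to the built-in list
    return trackers or list(fallback)


if __name__ == "__main__":
    defaults = ["udp://tracker.example.org:6969"]  # placeholder URL
    print(load_trackers("trackers.txt", defaults))
```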

@@ -1,36 +1,34 @@
-# Base settings
 FROM alpine:3.12

+#Base settings
 ENV HOME /root

-# Install packages

-COPY install-dep-packages.sh /root/install-dep-packages.sh

-RUN /root/install-dep-packages.sh install

 COPY requirements.txt /root/requirements.txt

-RUN pip3 install -r /root/requirements.txt \
- && /root/install-dep-packages.sh remove-makedeps \
+#Install ZeroNet
+RUN apk --update --no-cache --no-progress add python3 python3-dev gcc libffi-dev musl-dev make tor openssl \
+ && pip3 install -r /root/requirements.txt \
+ && apk del python3-dev gcc libffi-dev musl-dev make \
  && echo "ControlPort 9051" >> /etc/tor/torrc \
  && echo "CookieAuthentication 1" >> /etc/tor/torrc


 RUN python3 -V \
  && python3 -m pip list \
  && tor --version \
  && openssl version

-# Add Zeronet source

+#Add Zeronet source
 COPY . /root
 VOLUME /root/data

-# Control if Tor proxy is started
+#Control if Tor proxy is started
 ENV ENABLE_TOR false

 WORKDIR /root

-# Set upstart command
+#Set upstart command
 CMD (! ${ENABLE_TOR} || tor&) && python3 zeronet.py --ui_ip 0.0.0.0 --fileserver_port 26552

-# Expose ports
+#Expose ports
 EXPOSE 43110 26552
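The `CMD` line in this hunk relies on shell short-circuiting. `${ENABLE_TOR}` is expanded and run as a command, so only `true` and `false` behave as intended. With `false`, `! false` succeeds and `tor&` is skipped. With `true`, `! true` fails and `tor` is started in the background. In both cases the subshell exits successfully, so `python3 zeronet.py ...` runs. The Python sketch below models that decision only; the function name `startup_commands` is an assumption for illustration, and nothing here runs a process.

```python
from typing import List

ZERONET_CMD = "python3 zeronet.py --ui_ip 0.0.0.0 --fileserver_port 26552"


def startup_commands(enable_tor: str) -> List[str]:
    """Model which commands the Dockerfile CMD ends up starting.

    Hypothetical helper: mirrors `(! ${ENABLE_TOR} || tor&) && python3 ...`
    for the two supported values of ENABLE_TOR.
    """
    if enable_tor not in ("true", "false"):
        # The shell would try to execute any other value as a command
        raise ValueError("ENABLE_TOR must be 'true' or 'false'")
    commands = []
    if enable_tor == "true":
        commands.append("tor &")  # started in the background
    commands.append(ZERONET_CMD)  # always started
    return commands


if __name__ == "__main__":
    print(startup_commands("false"))
    print(startup_commands("true"))
```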

243

README-ru.md

@@ -3,206 +3,131 @@
 [简体中文](./README-zh-cn.md)
 [English](./README.md)

-Decentralized websites using Bitcoin cryptography and the BitTorrent network - https://zeronet.dev

+Decentralized websites using Bitcoin cryptography and the BitTorrent protocol - https://zeronet.dev ([Mirror in ZeroNet](http://127.0.0.1:43110/1ZeroNetyV5mKY9JF1gsm82TuBXHpfdLX/)). Unlike Bitcoin, ZeroNet does not need a blockchain to work, but it uses the same cryptography to ensure data integrity and verification.

 ## Why?

-* We believe in an open, free, and uncensored network and communication.
-* No single point of failure: A site is online as long as at least 1 peer is serving it.
-* No hosting costs: Sites are served by visitors.
-* Impossible to shut down: It's nowhere because it's everywhere.
-* Fast and works offline: You can access a site even if the Internet is unavailable.

+- We believe in an open, free, and censorship-resistant network and communication.
+- No single point of failure: A site stays online as long as at least 1 peer is serving it.
+- No hosting costs: Sites are served by visitors.
+- Impossible to shut down: It's nowhere because it's everywhere.
+- Speed and the ability to work without the Internet: You can access a site because a copy of it is stored on your computer and with your peers.

 ## Features

-* Sites updated in real time
-* Namecoin .bit domain support
-* Easy to set up: unpack & run
-* One-click website cloning
-* Password-less [BIP32](https://github.com/bitcoin/bips/blob/master/bip-0032.mediawiki)
-  based authorization: Your account is protected by the same cryptography as your Bitcoin wallet
-* Built-in SQL server with P2P data synchronization: Allows easier site development and faster page loading
-* Anonymity: Full Tor network support with .onion hidden services instead of IPv4 addresses
-* TLS encrypted connections
-* Automatic uPnP port opening
-* Plugin for multiuser (openproxy) support
-* Works with any browser and operating system

+- Real-time site updates
+- `.bit` domain support ([Namecoin](https://www.namecoin.org))
+- Easy installation: just unpack and run
+- "One-click" site cloning
+- Password-less [BIP32](https://github.com/bitcoin/bips/blob/master/bip-0032.mediawiki)
+  authorization: Your account is protected by the same cryptography as your Bitcoin wallet
+- Built-in SQL server with P2P data synchronization: Allows easier site development and faster page loading
+- Anonymity: Full Tor network support using `.onion` hidden services instead of IPv4 addresses
+- TLS-encrypted connection
+- Automatic UPnP port opening
+- Plugin for multi-user support (openproxy)
+- Works with any browser and operating system

+## Current limitations

+- File transactions are not compressed
+- No private sites


 ## How does it work?

-* After starting `zeronet.py` you will be able to visit zites (zeronet sites) using
-  `http://127.0.0.1:43110/{zeronet_address}`
-  (e.g. `http://127.0.0.1:43110/1HELLoE3sFD9569CLCbHEAVqvqV7U2Ri9d`).
-* When you visit a new zeronet site, it tries to find peers using BitTorrent
-  so it can download the site files (html, css, js ...) from them.
-* Each visited zite is also served by you (i.e. it is stored on your computer).
-* Every site contains a `content.json` file which holds all other files in a sha512 hash
-  and a signature generated using the site's private key.
-* If the site owner (who has the private key for the site address) modifies the site, then he/she
+- After starting `zeronet.py` you will be able to visit sites in ZeroNet using
+  `http://127.0.0.1:43110/{zeronet_address}`
+  (For example: `http://127.0.0.1:43110/1HELLoE3sFD9569CLCbHEAVqvqV7U2Ri9d`).
+- When you visit a new site in ZeroNet, it tries to find peers using the BitTorrent protocol
+  so it can download the site files (HTML, CSS, JS, etc.) from them.
+- After visiting a site, you also become one of its peers.
+- Every site contains a `content.json` file which holds the SHA512 hashes of all other files
+  and a signature generated using the site's private key.
+- If the site owner (whoever holds the private key for the site address) modifies the site, they
   sign a new `content.json` and publish it to the peers. The peers then verify the integrity of `content.json`
-  (using the signature), download the modified files and publish the new content to other peers.

-#### [Slideshow about ZeroNet cryptography, site updates, multi-user sites »](https://docs.google.com/presentation/d/1_2qK1IuOKJ51pgBvllZ9Yu7Au2l551t3XBgyTSvilew/pub?start=false&loop=false&delayms=3000)
-#### [Frequently asked questions »](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/faq/)

-#### [ZeroNet Developer Documentation »](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/site_development/getting_started/)
+  (using the signature), download the modified files and distribute the new content to other peers.

+[Presentation about ZeroNet cryptography, site updates, multi-user sites »](https://docs.google.com/presentation/d/1_2qK1IuOKJ51pgBvllZ9Yu7Au2l551t3XBgyTSvilew/pub?start=false&loop=false&delayms=3000)
+[Frequently asked questions »](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/faq/)
+[ZeroNet Developer Documentation »](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/site_development/getting_started/)

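The "How does it work?" bullets say that `content.json` lists SHA512 hashes of the site's files and that peers download the files that changed. The hash-comparison part of that can be sketched as below. The manifest shape (`{relative_path: hex_digest}`) and the function names are assumptions for illustration and are not ZeroNet's actual `content.json` schema; signature verification is left out because it depends on the site's key scheme.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha512_hex(data: bytes) -> str:
    """Hex-encoded SHA-512 digest of `data`."""
    return hashlib.sha512(data).hexdigest()


def changed_files(site_dir: str, manifest: Dict[str, str]) -> List[str]:
    """Return relative paths whose local copy is missing or has a different hash.

    Hypothetical helper: `manifest` maps relative path -> expected SHA-512 hex.
    """
    stale = []
    for rel_path, expected in sorted(manifest.items()):
        local = Path(site_dir) / rel_path
        if not local.is_file() or sha512_hex(local.read_bytes()) != expected:
            stale.append(rel_path)
    return stale
```

A peer following this idea would fetch only the paths returned by `changed_files` after it has accepted a newly signed manifest.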

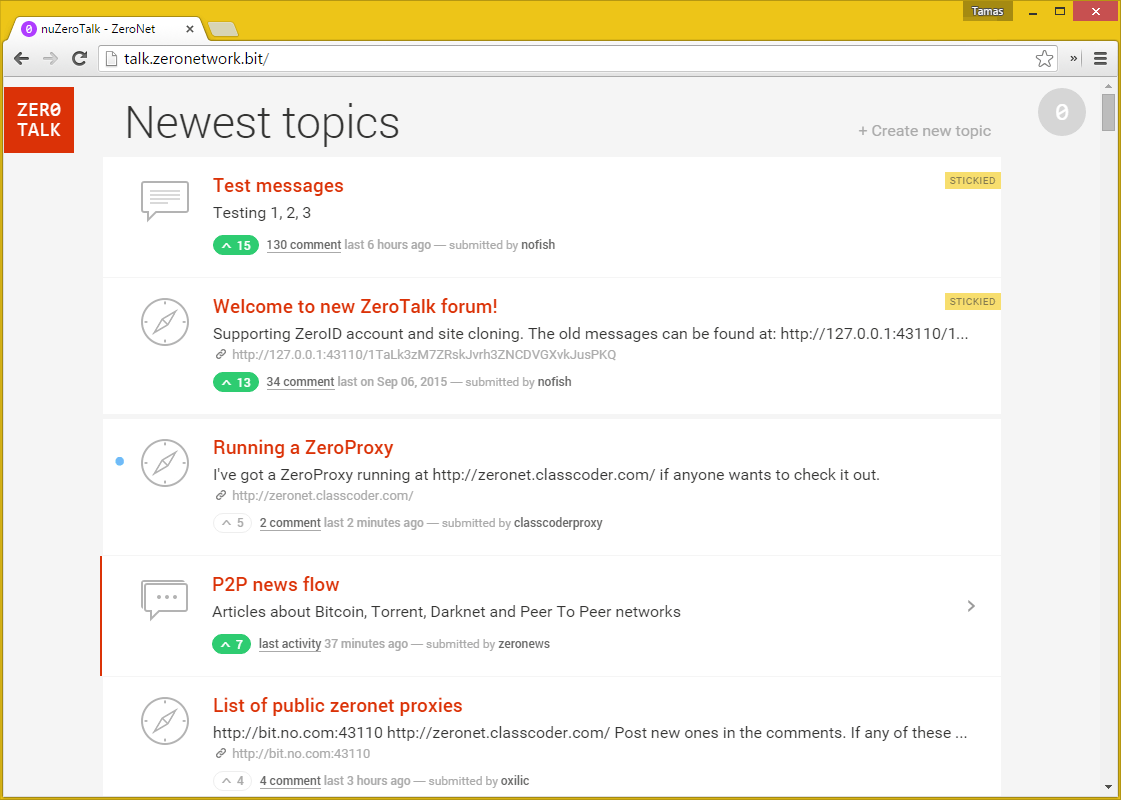

 ## Screenshots

+[More screenshots in the ZeroNet documentation »](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/using_zeronet/sample_sites/)

-#### [More screenshots in the ZeroNet docs »](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/using_zeronet/sample_sites/)
+## How to join?

+### Windows

-## How to join
+- Download and unpack the [ZeroNet-win.zip](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-win.zip) archive (26MB)
+- Run `ZeroNet.exe`

-* Download the ZeroBundle package:
-  * [Microsoft Windows](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-win.zip)
-  * [Apple macOS](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-mac.zip)
-  * [Linux 64-bit](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-linux.zip)
-  * [Linux 32-bit](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-linux.zip)
-* Unpack it anywhere
-* Run `ZeroNet.exe` (win), `ZeroNet(.app)` (osx), `ZeroNet.sh` (linux)
+### macOS

-### Linux terminal
+- Download and unpack the [ZeroNet-mac.zip](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-mac.zip) archive (14MB)
+- Run `ZeroNet.app`

-* `wget https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-linux.zip`
-* `unzip ZeroNet-linux.zip`
-* `cd ZeroNet-linux`
-* Start with `./ZeroNet.sh`
+### Linux (64-bit)

-It downloads the latest version of ZeroNet, then starts it automatically.
+- Download and unpack the [ZeroNet-linux.zip](https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-linux.zip) archive (14MB)
+- Run `./ZeroNet.sh`

-#### Manual install for Debian Linux
+> **Note**
+> Start it like this: `./ZeroNet.sh --ui_ip '*' --ui_restrict your_ip_address`, to allow remote connections to the web interface.

-* `wget https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-src.zip`
-* `unzip ZeroNet-src.zip`
-* `cd ZeroNet`
-* `sudo apt-get update`
-* `sudo apt-get install python3-pip`
-* `sudo python3 -m pip install -r requirements.txt`
-* Start with `python3 zeronet.py`
-* Open http://127.0.0.1:43110/ in your browser.
+### Docker

-### [Arch Linux](https://www.archlinux.org)
+The official image is here: https://hub.docker.com/r/canewsin/zeronet/

-* `git clone https://aur.archlinux.org/zeronet.git`
-* `cd zeronet`
-* `makepkg -srci`
-* `systemctl start zeronet`
-* Open http://127.0.0.1:43110/ in your browser.
+### Android (arm, arm64, x86)

-See [ArchWiki](https://wiki.archlinux.org)'s [ZeroNet
-article](https://wiki.archlinux.org/index.php/ZeroNet) for further assistance.
+- Requires at least Android 5.0 Lollipop
+- [<img src="https://play.google.com/intl/en_us/badges/images/generic/en_badge_web_generic.png"
+  alt="Download from Google Play"
+  height="80">](https://play.google.com/store/apps/details?id=in.canews.zeronetmobile)
+- Download APK: https://github.com/canewsin/zeronet_mobile/releases

-### [Gentoo Linux](https://www.gentoo.org)
+### Android (arm, arm64, x86) Lightweight view-only client (1MB)

-* [`layman -a raiagent`](https://github.com/leycec/raiagent)
-* `echo '>=net-vpn/zeronet-0.5.4' >> /etc/portage/package.accept_keywords`
-* *(Optional)* Enable Tor support: `echo 'net-vpn/zeronet tor' >>
-  /etc/portage/package.use`
-* `emerge zeronet`
-* `rc-service zeronet start`
-* Open http://127.0.0.1:43110/ in your browser.
+- Requires at least Android 4.1 Jelly Bean
+- [<img src="https://play.google.com/intl/en_us/badges/images/generic/en_badge_web_generic.png"
+  alt="Download from Google Play"
+  height="80">](https://play.google.com/store/apps/details?id=dev.zeronetx.app.lite)

-See `/usr/share/doc/zeronet-*/README.gentoo.bz2` for further assistance.

### Установка из исходного кода

|

||||

|

||||

### [FreeBSD](https://www.freebsd.org/)

|

||||

|

||||

* `pkg install zeronet` or `cd /usr/ports/security/zeronet/ && make install clean`

|

||||

* `sysrc zeronet_enable="YES"`

|

||||

* `service zeronet start`

|

||||

* Откройте http://127.0.0.1:43110/ в вашем браузере.

|

||||

|

||||

### [Vagrant](https://www.vagrantup.com/)

|

||||

|

||||

* `vagrant up`

|

||||

* Подключитесь к VM с помощью `vagrant ssh`

|

||||

* `cd /vagrant`

|

||||

* Запустите `python3 zeronet.py --ui_ip 0.0.0.0`

|

||||

* Откройте http://127.0.0.1:43110/ в вашем браузере.

|

||||

|

||||

### [Docker](https://www.docker.com/)

|

||||

* `docker run -d -v <local_data_folder>:/root/data -p 15441:15441 -p 127.0.0.1:43110:43110 canewsin/zeronet`

|

||||

* Это изображение Docker включает в себя прокси-сервер Tor, который по умолчанию отключён.

|

||||

Остерегайтесь что некоторые хостинг-провайдеры могут не позволить вам запускать Tor на своих серверах.

|

||||

Если вы хотите включить его,установите переменную среды `ENABLE_TOR` в` true` (по умолчанию: `false`) Например:

|

||||

|

||||

`docker run -d -e "ENABLE_TOR=true" -v <local_data_folder>:/root/data -p 15441:15441 -p 127.0.0.1:43110:43110 canewsin/zeronet`

|

||||

* Open http://127.0.0.1:43110/ in your browser.

|

||||

|

||||

### [Virtualenv](https://virtualenv.readthedocs.org/en/latest/)

|

||||

|

||||

* `virtualenv env`

|

||||

* `source env/bin/activate`

|

||||

* `pip install msgpack gevent`

|

||||

* `python3 zeronet.py`

|

||||

* Open http://127.0.0.1:43110/ in your browser.

|

||||

|

||||

## Current limitations

|

||||

|

||||

* File transactions are not compressed

|

||||

* No private sites

|

||||

|

||||

|

||||

## How can I create a ZeroNet site?

|

||||

|

||||

Shut down ZeroNet if it is running

|

||||

|

||||

```bash

|

||||

$ zeronet.py siteCreate

|

||||

...

|

||||

- Site private key: 23DKQpzxhbVBrAtvLEc2uvk7DZweh4qL3fn3jpM3LgHDczMK2TtYUq

|

||||

- Site address: 13DNDkMUExRf9Xa9ogwPKqp7zyHFEqbhC2

|

||||

...

|

||||

- Site created!

|

||||

$ zeronet.py

|

||||

...

|

||||

```sh

|

||||

wget https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-src.zip

|

||||

unzip ZeroNet-src.zip

|

||||

cd ZeroNet

|

||||

sudo apt-get update

|

||||

sudo apt-get install python3-pip

|

||||

sudo python3 -m pip install -r requirements.txt

|

||||

```

|

||||

- Run `python3 zeronet.py`

|

||||

|

||||

Congratulations, you're finished! Now anyone can access your site using

|

||||

`http://localhost:43110/13DNDkMUExRf9Xa9ogwPKqp7zyHFEqbhC2`

|

||||

Open the ZeroHello landing page in your browser at http://127.0.0.1:43110/

|

||||

|

||||

Next steps: [ZeroNet Developer Documentation](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/site_development/getting_started/)

|

||||

## How do I create a ZeroNet site?

|

||||

|

||||

- Click **⋮** > **"Create new, empty site"** in the menu on the [ZeroHello](http://127.0.0.1:43110/1HELLoE3sFD9569CLCbHEAVqvqV7U2Ri9d) site.

|

||||

- You will be **redirected** to a completely new site that only you can modify!

|

||||

- You can find and modify your site's content in the **data/[your_site_address]** directory

|

||||

- After making changes, open your site, drag the "0" button in the top-right corner to the left, then press the **sign** and **publish** buttons at the bottom

|

||||

|

||||

## How can I modify a ZeroNet site?

|

||||

|

||||

* Modify the files located in the data/13DNDkMUExRf9Xa9ogwPKqp7zyHFEqbhC2 directory.

|

||||

When you have finished making changes:

|

||||

|

||||

```bash

|

||||

$ zeronet.py siteSign 13DNDkMUExRf9Xa9ogwPKqp7zyHFEqbhC2

|

||||

- Signing site: 13DNDkMUExRf9Xa9ogwPKqp7zyHFEqbhC2...

|

||||

Private key (input hidden):

|

||||

```

|

||||

|

||||

* Enter the private key you received when you created the site, then:

|

||||

|

||||

```bash

|

||||

$ zeronet.py sitePublish 13DNDkMUExRf9Xa9ogwPKqp7zyHFEqbhC2

|

||||

...

|

||||

Site:13DNDk..bhC2 Publishing to 3/10 peers...

|

||||

Site:13DNDk..bhC2 Successfuly published to 3 peers

|

||||

- Serving files....

|

||||

```

|

||||

|

||||

* That's it! You have successfully signed and published your changes.

|

||||

|

||||

Next steps: [ZeroNet Developer Documentation](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/site_development/getting_started/)

|

||||

|

||||

## Support the project

|

||||

- Bitcoin: 1ZeroNetyV5mKY9JF1gsm82TuBXHpfdLX (Preferred)

|

||||

|

||||

- Bitcoin: 1ZeroNetyV5mKY9JF1gsm82TuBXHpfdLX (Recommended)

|

||||

- LiberaPay: https://liberapay.com/PramUkesh

|

||||

- Paypal: https://paypal.me/PramUkesh

|

||||

- Others: [Donate](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/help_zeronet/donate/#help-to-keep-zeronet-development-alive)

|

||||

|

||||

- Other ways: [Donate](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/help_zeronet/donate/#help-to-keep-zeronet-development-alive)

|

||||

|

||||

#### Thank you!

|

||||

|

||||

* More info, help, changelog, ZeroNet sites: https://www.reddit.com/r/zeronetx/

|

||||

* Come chat with us: [#zeronet @ FreeNode](https://kiwiirc.com/client/irc.freenode.net/zeronet) or on [gitter](https://gitter.im/canewsin/ZeroNet)

|

||||

* Email: canews.in@gmail.com

|

||||

- Here you can get more information and help, read the changelog, and explore ZeroNet sites: https://www.reddit.com/r/zeronetx/

|

||||

- Discussion takes place on the [#zeronet @ FreeNode](https://kiwiirc.com/client/irc.freenode.net/zeronet) channel or on [Gitter](https://gitter.im/canewsin/ZeroNet)

|

||||

- Email: canews.in@gmail.com

|

||||

|

|

|

|||

19

README.md


|

|

@ -1,5 +1,4 @@

|

|||

# ZeroNet [Tests](https://github.com/ZeroNetX/ZeroNet/actions/workflows/tests.yml) [FAQ](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/faq/) [Donate](https://docs.zeronet.dev/1DeveLopDZL1cHfKi8UXHh2UBEhzH6HhMp/help_zeronet/donate/) [Docker Hub](https://hub.docker.com/r/canewsin/zeronet)

|

||||

|

||||

<!--TODO: Update Onion Site -->

|

||||

Decentralized websites using Bitcoin crypto and the BitTorrent network - https://zeronet.dev / [ZeroNet Site](http://127.0.0.1:43110/1ZeroNetyV5mKY9JF1gsm82TuBXHpfdLX/). Unlike Bitcoin, ZeroNet doesn't need a blockchain to run, but it uses the same cryptography as BTC to ensure data integrity and validation.

|

||||

|

||||

|

|

@ -100,6 +99,24 @@ Decentralized websites using Bitcoin crypto and the BitTorrent network - https:/

|

|||

#### Docker

|

||||

There is an official image, built from source at: https://hub.docker.com/r/canewsin/zeronet/

|

||||

|

||||

### Online Proxies

|

||||

Proxies act like seed boxes for sites (e.g. ZNX runs on a cloud VPS); you can try the ZeroNet experience through them. Add your proxy below if you run one.

|

||||

|

||||

#### Official ZNX Proxy :

|

||||

|

||||

https://proxy.zeronet.dev/

|

||||

|

||||

https://zeronet.dev/

|

||||

|

||||

#### From Community

|

||||

|

||||

https://0net-preview.com/

|

||||

|

||||

https://portal.ngnoid.tv/

|

||||

|

||||

https://zeronet.ipfsscan.io/

|

||||

|

||||

|

||||

### Install from source

|

||||

|

||||

- `wget https://github.com/ZeroNetX/ZeroNet/releases/latest/download/ZeroNet-src.zip`

|

||||

|

|

|

|||

|

|

@ -1,32 +0,0 @@

|

|||

#!/bin/sh

|

||||

set -e

|

||||

|

||||

arg_push=

|

||||

|

||||

case "$1" in

|

||||

--push) arg_push=y ; shift ;;

|

||||

esac

|

||||

|

||||

default_suffix=alpine

|

||||

prefix="${1:-local/}"

|

||||

|

||||

for dokerfile in dockerfiles/Dockerfile.* ; do

|

||||

suffix="`echo "$dokerfile" | sed 's/.*\/Dockerfile\.//'`"

|

||||

image_name="${prefix}zeronet:$suffix"

|

||||

|

||||

latest=""

|

||||

t_latest=""

|

||||

if [ "$suffix" = "$default_suffix" ] ; then

|

||||

latest="${prefix}zeronet:latest"

|

||||

t_latest="-t ${latest}"

|

||||

fi

|

||||

|

||||

echo "DOCKER BUILD $image_name"

|

||||

docker build -f "$dokerfile" -t "$image_name" $t_latest .

|

||||

if [ -n "$arg_push" ] ; then

|

||||

docker push "$image_name"

|

||||

if [ -n "$latest" ] ; then

|

||||

docker push "$latest"

|

||||

fi

|

||||

fi

|

||||

done
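
The tagging rule in the removed script above can be sketched in Python. This is only an illustration of the logic visible in the hunk: the function name `image_tags` and the constant `DEFAULT_SUFFIX` are hypothetical, and no `docker` command is actually run.

```python
DEFAULT_SUFFIX = "alpine"  # mirrors default_suffix in the shell script


def image_tags(dockerfile_path, prefix="local/"):
    """Return the tags the loop would pass to `docker build -t` (hypothetical helper)."""
    # Equivalent of sed 's/.*\/Dockerfile\.//': keep what follows the last "/Dockerfile."
    suffix = dockerfile_path.rsplit("/Dockerfile.", 1)[-1]
    tags = [f"{prefix}zeronet:{suffix}"]
    # Only the default flavour is additionally tagged as "latest"
    if suffix == DEFAULT_SUFFIX:
        tags.append(f"{prefix}zeronet:latest")
    return tags
```

Under these assumptions, `dockerfiles/Dockerfile.alpine` gets both its own tag and `latest`, while every other Dockerfile gets a single tag.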

|

||||

|

|

@ -1 +0,0 @@

|

|||

Dockerfile.alpine3.13

|

||||

|

|

@ -1,44 +0,0 @@

|

|||

# THIS FILE IS AUTOGENERATED BY gen-dockerfiles.sh.

|

||||

# SEE zeronet-Dockerfile FOR THE SOURCE FILE.

|

||||

|

||||

FROM alpine:3.13

|

||||

|

||||

# Base settings

|

||||

ENV HOME /root

|

||||

|

||||

# Install packages

|

||||

|

||||

# Install packages

|

||||

|

||||

COPY install-dep-packages.sh /root/install-dep-packages.sh

|

||||

|

||||

RUN /root/install-dep-packages.sh install

|

||||

|

||||

COPY requirements.txt /root/requirements.txt

|

||||

|

||||

RUN pip3 install -r /root/requirements.txt \

|

||||

&& /root/install-dep-packages.sh remove-makedeps \

|

||||

&& echo "ControlPort 9051" >> /etc/tor/torrc \

|

||||

&& echo "CookieAuthentication 1" >> /etc/tor/torrc

|

||||

|

||||

RUN python3 -V \

|

||||

&& python3 -m pip list \

|

||||

&& tor --version \

|

||||

&& openssl version

|

||||

|

||||

# Add Zeronet source

|

||||

|

||||

COPY . /root

|

||||

VOLUME /root/data

|

||||

|

||||

# Control if Tor proxy is started

|

||||

ENV ENABLE_TOR false

|

||||

|

||||

WORKDIR /root

|

||||

|

||||

# Set upstart command

|

||||

CMD (! ${ENABLE_TOR} || tor&) && python3 zeronet.py --ui_ip 0.0.0.0 --fileserver_port 26552

|

||||

|

||||

# Expose ports

|

||||

EXPOSE 43110 26552

|

||||

|

||||

|

|

@ -1 +0,0 @@

|

|||

Dockerfile.ubuntu20.04

|

||||

|

|

@ -1,44 +0,0 @@

|

|||

# THIS FILE IS AUTOGENERATED BY gen-dockerfiles.sh.

|

||||

# SEE zeronet-Dockerfile FOR THE SOURCE FILE.

|

||||

|

||||

FROM ubuntu:20.04

|

||||

|

||||

# Base settings

|

||||

ENV HOME /root

|

||||

|

||||

# Install packages

|

||||

|

||||

# Install packages

|

||||

|

||||

COPY install-dep-packages.sh /root/install-dep-packages.sh

|

||||

|

||||

RUN /root/install-dep-packages.sh install

|

||||

|

||||

COPY requirements.txt /root/requirements.txt

|

||||

|

||||

RUN pip3 install -r /root/requirements.txt \

|

||||

&& /root/install-dep-packages.sh remove-makedeps \

|

||||

&& echo "ControlPort 9051" >> /etc/tor/torrc \

|

||||

&& echo "CookieAuthentication 1" >> /etc/tor/torrc

|

||||

|

||||

RUN python3 -V \

|

||||

&& python3 -m pip list \

|

||||

&& tor --version \

|

||||

&& openssl version

|

||||

|

||||

# Add Zeronet source

|

||||

|

||||

COPY . /root

|

||||

VOLUME /root/data

|

||||

|

||||

# Control if Tor proxy is started

|

||||

ENV ENABLE_TOR false

|

||||

|

||||

WORKDIR /root

|

||||

|

||||

# Set upstart command

|

||||

CMD (! ${ENABLE_TOR} || tor&) && python3 zeronet.py --ui_ip 0.0.0.0 --fileserver_port 26552

|

||||

|

||||

# Expose ports

|

||||

EXPOSE 43110 26552

|

||||

|

||||

|

|

@ -1,34 +0,0 @@

|

|||

#!/bin/sh

|

||||

|

||||

set -e

|

||||

|

||||

die() {

|

||||

echo "$@" > /dev/stderr

|

||||

exit 1

|

||||

}

|

||||

|

||||

for os in alpine:3.13 ubuntu:20.04 ; do

|

||||

prefix="`echo "$os" | sed -e 's/://'`"

|

||||

short_prefix="`echo "$os" | sed -e 's/:.*//'`"

|

||||

|

||||

zeronet="zeronet-Dockerfile"

|

||||

|

||||

dockerfile="Dockerfile.$prefix"

|

||||

dockerfile_short="Dockerfile.$short_prefix"

|

||||

|

||||

echo "GEN $dockerfile"

|

||||

|

||||

if ! test -f "$zeronet" ; then

|

||||

die "No such file: $zeronet"

|

||||

fi

|

||||

|

||||

echo "\

|

||||

# THIS FILE IS AUTOGENERATED BY gen-dockerfiles.sh.

|

||||

# SEE $zeronet FOR THE SOURCE FILE.

|

||||

|

||||

FROM $os

|

||||

|

||||

`cat "$zeronet"`

|

||||

" > "$dockerfile.tmp" && mv "$dockerfile.tmp" "$dockerfile" && ln -s -f "$dockerfile" "$dockerfile_short"

|

||||

done
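
The two `sed` expressions in the removed generator decide the output file names. A minimal Python sketch of that mapping follows; the function name `dockerfile_names` is hypothetical and the sketch does not write any files or create the symlink.

```python
def dockerfile_names(os_image):
    """Map a base image such as "alpine:3.13" to the generated file names (hypothetical helper)."""
    # sed -e 's/://' removes only the first colon
    prefix = os_image.replace(":", "", 1)
    # sed -e 's/:.*//' drops everything from the first colon onward
    short_prefix = os_image.split(":", 1)[0]
    return f"Dockerfile.{prefix}", f"Dockerfile.{short_prefix}"
```

The first name is the generated file and the second is the short name that the script points at it with `ln -s -f`.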

|

||||

|

||||

|

|

@ -1,49 +0,0 @@

|

|||

#!/bin/sh

|

||||

set -e

|

||||

|

||||

do_alpine() {

|

||||

local deps="python3 py3-pip openssl tor"

|

||||

local makedeps="python3-dev gcc g++ libffi-dev musl-dev make automake autoconf libtool"

|

||||

|

||||

case "$1" in

|

||||

install)

|

||||

apk --update --no-cache --no-progress add $deps $makedeps

|

||||

;;

|

||||

remove-makedeps)

|

||||

apk del $makedeps

|

||||

;;

|

||||

esac

|

||||

}

|

||||

|

||||

do_ubuntu() {

|

||||

local deps="python3 python3-pip openssl tor"

|

||||

local makedeps="python3-dev gcc g++ libffi-dev make automake autoconf libtool"

|

||||

|

||||

case "$1" in

|

||||

install)

|

||||

apt-get update && \

|

||||

apt-get install --no-install-recommends -y $deps $makedeps && \

|

||||

rm -rf /var/lib/apt/lists/*

|

||||

;;

|

||||

remove-makedeps)

|

||||

apt-get remove -y $makedeps

|

||||

;;

|

||||

esac

|

||||

}

|

||||

|

||||

if test -f /etc/os-release ; then

|

||||

. /etc/os-release

|

||||

elif test -f /usr/lib/os-release ; then

|

||||

. /usr/lib/os-release

|

||||

else

|

||||

echo "No such file: /etc/os-release" > /dev/stderr

|

||||

exit 1

|

||||

fi

|

||||

|

||||

case "$ID" in

|

||||

ubuntu) do_ubuntu "$@" ;;

|

||||

alpine) do_alpine "$@" ;;

|

||||

*)

|

||||

echo "Unsupported OS ID: $ID" > /dev/stderr

|

||||

exit 1

|

||||

esac

|

||||

1

plugins

Submodule


|

|

@ -0,0 +1 @@

|

|||

Subproject commit 689d9309f73371f4681191b125ec3f2e14075eeb

|

||||

|

|

@ -3,7 +3,7 @@ greenlet==0.4.16; python_version <= "3.6"

|

|||

gevent>=20.9.0; python_version >= "3.7"

|

||||

msgpack>=0.4.4

|

||||

base58

|

||||

merkletools

|

||||

merkletools @ git+https://github.com/ZeroNetX/pymerkletools.git@dev

|

||||

rsa

|

||||

PySocks>=1.6.8

|

||||

pyasn1

|

||||

|

|

|

|||

|

|

@ -13,8 +13,8 @@ import time

|

|||

class Config(object):

|

||||

|

||||

def __init__(self, argv):

|

||||

self.version = "0.7.6"

|

||||

self.rev = 4565

|

||||

self.version = "0.9.0"

|

||||

self.rev = 4630

|

||||

self.argv = argv

|

||||

self.action = None

|

||||

self.test_parser = None

|

||||

|

|

@ -82,45 +82,12 @@ class Config(object):

|

|||

from Crypt import CryptHash

|

||||

access_key_default = CryptHash.random(24, "base64") # Used to allow restrited plugins when multiuser plugin is enabled

|

||||

trackers = [

|

||||

# by zeroseed at http://127.0.0.1:43110/19HKdTAeBh5nRiKn791czY7TwRB1QNrf1Q/?:users/1HvNGwHKqhj3ZMEM53tz6jbdqe4LRpanEu:zn:dc17f896-bf3f-4962-bdd4-0a470040c9c5

|

||||

"zero://k5w77dozo3hy5zualyhni6vrh73iwfkaofa64abbilwyhhd3wgenbjqd.onion:15441",

|

||||

"zero://2kcb2fqesyaevc4lntogupa4mkdssth2ypfwczd2ov5a3zo6ytwwbayd.onion:15441",

|

||||

"zero://my562dxpjropcd5hy3nd5pemsc4aavbiptci5amwxzbelmzgkkuxpvid.onion:15441",

|

||||

"zero://pn4q2zzt2pw4nk7yidxvsxmydko7dfibuzxdswi6gu6ninjpofvqs2id.onion:15441",

|

||||

"zero://6i54dd5th73oelv636ivix6sjnwfgk2qsltnyvswagwphub375t3xcad.onion:15441",

|

||||

"zero://tl74auz4tyqv4bieeclmyoe4uwtoc2dj7fdqv4nc4gl5j2bwg2r26bqd.onion:15441",

|

||||

"zero://wlxav3szbrdhest4j7dib2vgbrd7uj7u7rnuzg22cxbih7yxyg2hsmid.onion:15441",

|

||||

"zero://zy7wttvjtsijt5uwmlar4yguvjc2gppzbdj4v6bujng6xwjmkdg7uvqd.onion:15441",

|

||||

|

||||

# ZeroNet 0.7.2 defaults:

|

||||

"zero://boot3rdez4rzn36x.onion:15441",

|

||||

"http://open.acgnxtracker.com:80/announce", # DE

|

||||

"http://tracker.bt4g.com:2095/announce", # Cloudflare

|

||||

"zero://2602:ffc5::c5b2:5360:26312", # US/ATL

|

||||

"zero://145.239.95.38:15441",

|

||||

"zero://188.116.183.41:26552",

|

||||

"zero://145.239.95.38:15441",

|

||||

"zero://211.125.90.79:22234",

|

||||

"zero://216.189.144.82:26312",

|

||||

"zero://45.77.23.92:15555",

|

||||

"zero://51.15.54.182:21041",

|

||||

"http://tracker.files.fm:6969/announce",

|

||||

"http://t.publictracker.xyz:6969/announce",

|

||||

"https://tracker.lilithraws.cf:443/announce",

|

||||

"udp://code2chicken.nl:6969/announce",

|

||||

"udp://abufinzio.monocul.us:6969/announce",

|

||||

"udp://tracker.0x.tf:6969/announce",

|

||||

"udp://tracker.zerobytes.xyz:1337/announce",

|

||||

"udp://vibe.sleepyinternetfun.xyz:1738/announce",

|

||||

"udp://www.torrent.eu.org:451/announce",

|

||||

"zero://k5w77dozo3hy5zualyhni6vrh73iwfkaofa64abbilwyhhd3wgenbjqd.onion:15441",

|

||||

"zero://2kcb2fqesyaevc4lntogupa4mkdssth2ypfwczd2ov5a3zo6ytwwbayd.onion:15441",

|

||||

"zero://gugt43coc5tkyrhrc3esf6t6aeycvcqzw7qafxrjpqbwt4ssz5czgzyd.onion:15441",

|

||||

"zero://hb6ozikfiaafeuqvgseiik4r46szbpjfu66l67wjinnyv6dtopuwhtqd.onion:15445",

|

||||

"zero://75pmmcbp4vvo2zndmjnrkandvbg6jyptygvvpwsf2zguj7urq7t4jzyd.onion:7777",

|

||||

"zero://dw4f4sckg2ultdj5qu7vtkf3jsfxsah3mz6pivwfd6nv3quji3vfvhyd.onion:6969",

|

||||

"zero://5vczpwawviukvd7grfhsfxp7a6huz77hlis4fstjkym5kmf4pu7i7myd.onion:15441",

|

||||

"zero://ow7in4ftwsix5klcbdfqvfqjvimqshbm2o75rhtpdnsderrcbx74wbad.onion:15441",

|

||||

"zero://agufghdtniyfwty3wk55drxxwj2zxgzzo7dbrtje73gmvcpxy4ngs4ad.onion:15441",

|

||||

"zero://qn65si4gtcwdiliq7vzrwu62qrweoxb6tx2cchwslaervj6szuje66qd.onion:26117",

|

||||

"https://tracker.babico.name.tr:443/announce",

|

||||

]

|

||||

# Platform specific

|

||||

if sys.platform.startswith("win"):

|

||||

|

|

@ -284,31 +251,12 @@ class Config(object):

|

|||

self.parser.add_argument('--access_key', help='Plugin access key default: Random key generated at startup', default=access_key_default, metavar='key')

|

||||

self.parser.add_argument('--dist_type', help='Type of installed distribution', default='source')

|

||||

|

||||

self.parser.add_argument('--size_limit', help='Default site size limit in MB', default=10, type=int, metavar='limit')

|

||||

self.parser.add_argument('--size_limit', help='Default site size limit in MB', default=25, type=int, metavar='limit')

|

||||

self.parser.add_argument('--file_size_limit', help='Maximum per file size limit in MB', default=10, type=int, metavar='limit')

|

||||

self.parser.add_argument('--connected_limit', help='Max number of connected peers per site. Soft limit.', default=10, type=int, metavar='connected_limit')

|

||||

self.parser.add_argument('--global_connected_limit', help='Max number of connections. Soft limit.', default=512, type=int, metavar='global_connected_limit')

|

||||

self.parser.add_argument('--connected_limit', help='Max connected peer per site', default=8, type=int, metavar='connected_limit')

|

||||

self.parser.add_argument('--global_connected_limit', help='Max connections', default=512, type=int, metavar='global_connected_limit')

|

||||

self.parser.add_argument('--workers', help='Download workers per site', default=5, type=int, metavar='workers')

|

||||

|

||||

self.parser.add_argument('--site_announce_interval_min', help='Site announce interval for the most active sites, in minutes.', default=4, type=int, metavar='site_announce_interval_min')

|

||||

self.parser.add_argument('--site_announce_interval_max', help='Site announce interval for inactive sites, in minutes.', default=30, type=int, metavar='site_announce_interval_max')

|

||||

|

||||

self.parser.add_argument('--site_peer_check_interval_min', help='Connectable peers check interval for the most active sites, in minutes.', default=5, type=int, metavar='site_peer_check_interval_min')

|

||||

self.parser.add_argument('--site_peer_check_interval_max', help='Connectable peers check interval for inactive sites, in minutes.', default=20, type=int, metavar='site_peer_check_interval_max')

|

||||

|

||||

self.parser.add_argument('--site_update_check_interval_min', help='Site update check interval for the most active sites, in minutes.', default=5, type=int, metavar='site_update_check_interval_min')

|

||||

self.parser.add_argument('--site_update_check_interval_max', help='Site update check interval for inactive sites, in minutes.', default=45, type=int, metavar='site_update_check_interval_max')

|

||||

|

||||

self.parser.add_argument('--site_connectable_peer_count_max', help='Search for as many connectable peers for the most active sites', default=10, type=int, metavar='site_connectable_peer_count_max')

|

||||

self.parser.add_argument('--site_connectable_peer_count_min', help='Search for as many connectable peers for inactive sites', default=2, type=int, metavar='site_connectable_peer_count_min')

|

||||

|

||||

self.parser.add_argument('--send_back_lru_size', help='Size of the send back LRU cache', default=5000, type=int, metavar='send_back_lru_size')

|

||||

self.parser.add_argument('--send_back_limit', help='Send no more than so many files at once back to peer, when we discovered that the peer held older file versions', default=3, type=int, metavar='send_back_limit')

|

||||

|

||||

self.parser.add_argument('--expose_no_ownership', help='By default, ZeroNet tries checking updates for own sites more frequently. This can be used by a third party for revealing the network addresses of a site owner. If this option is enabled, ZeroNet performs the checks in the same way for any sites.', type='bool', choices=[True, False], default=False)

|

||||

|

||||

self.parser.add_argument('--simultaneous_connection_throttle_threshold', help='Throttle opening new connections when the number of outgoing connections in not fully established state exceeds the threshold.', default=15, type=int, metavar='simultaneous_connection_throttle_threshold')

|

||||

|

||||

self.parser.add_argument('--fileserver_ip', help='FileServer bind address', default="*", metavar='ip')

|

||||

self.parser.add_argument('--fileserver_port', help='FileServer bind port (0: randomize)', default=0, type=int, metavar='port')

|

||||

self.parser.add_argument('--fileserver_port_range', help='FileServer randomization range', default="10000-40000", metavar='port')
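
The help texts say that `--fileserver_port 0` means "randomize" and that `--fileserver_port_range` defaults to `"10000-40000"`. The code that performs the randomization is not part of this hunk, so the following is only a sketch of one way such a rule could work; the function name `pick_fileserver_port` and the inclusive bounds are assumptions.

```python
import random


def pick_fileserver_port(port, port_range="10000-40000", rng=random):
    """Return `port` unchanged unless it is 0, then pick one from the range (assumed behaviour)."""
    if port != 0:
        return port
    # The range string uses the "low-high" form shown in the argument default
    low, high = (int(part) for part in port_range.split("-"))
    return rng.randint(low, high)  # inclusive on both ends (assumption)
```

With a fixed port such as `--fileserver_port 26552`, as in the removed Dockerfiles, the range would not be consulted at all.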

|

||||

|

|

@ -371,8 +319,7 @@ class Config(object):

|

|||

|

||||

def loadTrackersFile(self):

|

||||

if not self.trackers_file:

|

||||

return None

|

||||

|

||||

self.trackers_file = ["trackers.txt", "{data_dir}/1HELLoE3sFD9569CLCbHEAVqvqV7U2Ri9d/trackers.txt"]

|

||||

self.trackers = self.arguments.trackers[:]

|

||||

|

||||

for trackers_file in self.trackers_file:

|

||||

|

|

@ -384,6 +331,9 @@ class Config(object):

|

|||

else: # Relative to zeronet.py

|

||||

trackers_file_path = self.start_dir + "/" + trackers_file

|

||||

|

||||

if not os.path.exists(trackers_file_path):

|

||||

continue

|

||||

|

||||

for line in open(trackers_file_path):

|

||||

tracker = line.strip()

|

||||

if "://" in tracker and tracker not in self.trackers:
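
The filter at the end of this hunk keeps a line only if it looks like a URL and is not already known. A self-contained sketch of that merge step is below; the function name `merge_trackers` is hypothetical, and the `append` call is an assumed body, since the statement under the `if` is not visible in the hunk.

```python
def merge_trackers(existing, lines):
    """Return `existing` plus every new tracker URL found in `lines` (hypothetical helper)."""
    trackers = list(existing)
    for line in lines:
        tracker = line.strip()
        # Same condition as in the hunk: must contain "://" and must not be a duplicate
        if "://" in tracker and tracker not in trackers:
            trackers.append(tracker)  # assumed body, not shown in the diff
    return trackers
```

Blank lines and anything without a scheme separator are silently skipped, which is why a `trackers.txt` can contain comments or empty lines without breaking the load.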

|

||||

|

|

|

|||

|

|

@ -17,13 +17,12 @@ from util import helper

|

|||

class Connection(object):

|

||||

__slots__ = (

|

||||

"sock", "sock_wrapped", "ip", "port", "cert_pin", "target_onion", "id", "protocol", "type", "server", "unpacker", "unpacker_bytes", "req_id", "ip_type",

|

||||

"handshake", "crypt", "connected", "connecting", "event_connected", "closed", "start_time", "handshake_time", "last_recv_time", "is_private_ip", "is_tracker_connection",

|

||||

"handshake", "crypt", "connected", "event_connected", "closed", "start_time", "handshake_time", "last_recv_time", "is_private_ip", "is_tracker_connection",

|

||||

"last_message_time", "last_send_time", "last_sent_time", "incomplete_buff_recv", "bytes_recv", "bytes_sent", "cpu_time", "send_lock",

|

||||

"last_ping_delay", "last_req_time", "last_cmd_sent", "last_cmd_recv", "bad_actions", "sites", "name", "waiting_requests", "waiting_streams"

|

||||

)

|

||||

|

||||

def __init__(self, server, ip, port, sock=None, target_onion=None, is_tracker_connection=False):

|

||||

self.server = server

|

||||

self.sock = sock

|

||||

self.cert_pin = None

|

||||

if "#" in ip:

|

||||

|

|

@ -43,6 +42,7 @@ class Connection(object):

|

|||

self.is_private_ip = False

|

||||

self.is_tracker_connection = is_tracker_connection

|

||||

|

||||

self.server = server

|

||||

self.unpacker = None # Stream incoming socket messages here

|

||||

self.unpacker_bytes = 0 # How many bytes the unpacker received

|

||||

self.req_id = 0 # Last request id

|

||||

|

|

@ -50,7 +50,6 @@ class Connection(object):

|

|||

self.crypt = None # Connection encryption method

|

||||

self.sock_wrapped = False # Socket wrapped to encryption

|

||||

|

||||

self.connecting = False

|

||||

self.connected = False

|

||||

self.event_connected = gevent.event.AsyncResult() # Solves on handshake received

|

||||

self.closed = False

|

||||

|

|

@ -82,11 +81,11 @@ class Connection(object):

|

|||

|

||||

def setIp(self, ip):

|

||||

self.ip = ip

|

||||

self.ip_type = self.server.getIpType(ip)

|

||||

self.ip_type = helper.getIpType(ip)

|

||||

self.updateName()

|

||||

|

||||

def createSocket(self):

|

||||

if self.server.getIpType(self.ip) == "ipv6" and not hasattr(socket, "socket_noproxy"):

|

||||

if helper.getIpType(self.ip) == "ipv6" and not hasattr(socket, "socket_noproxy"):

|

||||

# Create IPv6 connection as IPv4 when using proxy

|

||||

return socket.socket(socket.AF_INET6, socket.SOCK_STREAM)

|

||||

else:

|

||||

|

|

@ -119,28 +118,13 @@ class Connection(object):

|

|||

|

||||

# Open connection to peer and wait for handshake

|

||||

def connect(self):

|

||||

self.connecting = True

|

||||

try:

|

||||

return self._connect()

|

||||

except Exception as err:

|

||||

self.connecting = False

|

||||

self.connected = False

|

||||

raise

|

||||

|

||||

def _connect(self):

|

||||

self.updateOnlineStatus(outgoing_activity=True)

|

||||

|

||||

if not self.event_connected or self.event_connected.ready():

|

||||

self.event_connected = gevent.event.AsyncResult()

|

||||

|

||||

self.type = "out"

|

||||

|

||||

unreachability = self.server.getIpUnreachability(self.ip)

|

||||

if unreachability:

|

||||

raise Exception(unreachability)

|

||||

|

||||

if self.ip_type == "onion":

|

||||

if not self.server.tor_manager or not self.server.tor_manager.enabled:

|

||||

raise Exception("Can't connect to onion addresses, no Tor controller present")

|

||||

self.sock = self.server.tor_manager.createSocket(self.ip, self.port)

|

||||

elif config.tor == "always" and helper.isPrivateIp(self.ip) and self.ip not in config.ip_local:

|

||||

raise Exception("Can't connect to local IPs in Tor: always mode")

|

||||

elif config.trackers_proxy != "disable" and config.tor != "always" and self.is_tracker_connection:

|

||||

if config.trackers_proxy == "tor":

|

||||

self.sock = self.server.tor_manager.createSocket(self.ip, self.port)

|

||||

|

|

@ -164,56 +148,37 @@ class Connection(object):

|

|||

|

||||

self.sock.connect(sock_address)

|

||||

|

||||

if self.shouldEncrypt():

|

||||

# Implicit SSL

|

||||

should_encrypt = not self.ip_type == "onion" and self.ip not in self.server.broken_ssl_ips and self.ip not in config.ip_local

|

||||

if self.cert_pin:

|

||||

self.sock = CryptConnection.manager.wrapSocket(self.sock, "tls-rsa", cert_pin=self.cert_pin)

|

||||

self.sock.do_handshake()

|

||||

self.crypt = "tls-rsa"

|

||||

self.sock_wrapped = True

|

||||

elif should_encrypt and "tls-rsa" in CryptConnection.manager.crypt_supported:

|

||||

try:

|

||||

self.wrapSocket()

|

||||

self.sock = CryptConnection.manager.wrapSocket(self.sock, "tls-rsa")

|

||||

self.sock.do_handshake()

|

||||

self.crypt = "tls-rsa"

|

||||

self.sock_wrapped = True

|

||||

except Exception as err:

|

||||

if self.sock:

|

||||

self.sock.close()

|

||||

self.sock = None

|

||||

if self.mustEncrypt():

|

||||

raise

|

||||

self.log("Crypt connection error, adding %s:%s as broken ssl. %s" % (self.ip, self.port, Debug.formatException(err)))

|

||||

self.server.broken_ssl_ips[self.ip] = True

|

||||

return self.connect()

|

||||

if not config.force_encryption:

|

||||

self.log("Crypt connection error, adding %s:%s as broken ssl. %s" % (self.ip, self.port, Debug.formatException(err)))

|

||||

self.server.broken_ssl_ips[self.ip] = True

|

||||

self.sock.close()

|

||||

self.crypt = None

|

||||

self.sock = self.createSocket()